You have a three-minute screen recording of a conference attendee pane. A thousand people scrolled past.

No API. The app doesn't have one.

No scrapable HTML. The app's an iPad build.

No export. You asked. They said no.

Just pixels.

This situation comes up more than you'd think. Webinar attendee panes. Closed portals. Cvent rosters. CrowdCompass. Any directory somebody could only capture visually. And every time, the instinct is the same: ship the video to a vision model, ask for a spreadsheet, move on.

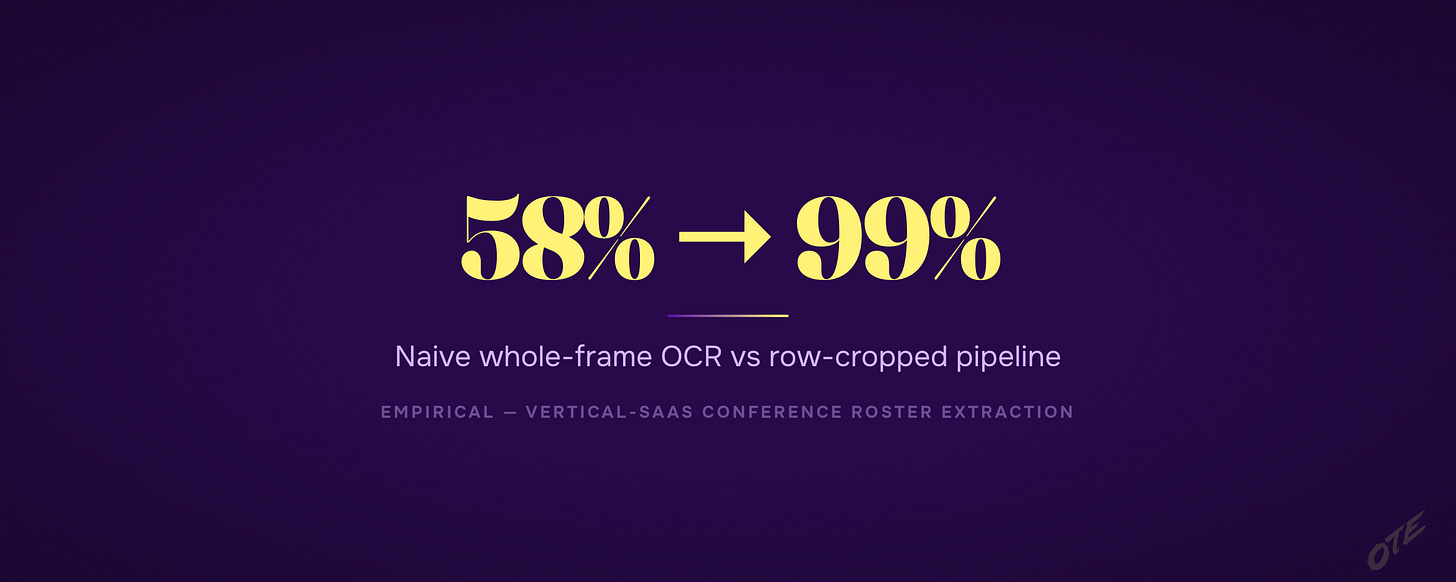

That instinct is wrong by about 40 percentage points.

The pixels-only rule

If the only copy of the list you can get is a video or a screenshot — no API, no web page you can scrape, no export button — you can't just hand the whole video to a vision model and hope. You have to break the video into pictures of one person at a time and read them one at a time.

That's the whole rule. Everything below it is why.

Why handing the whole video to a vision model fails

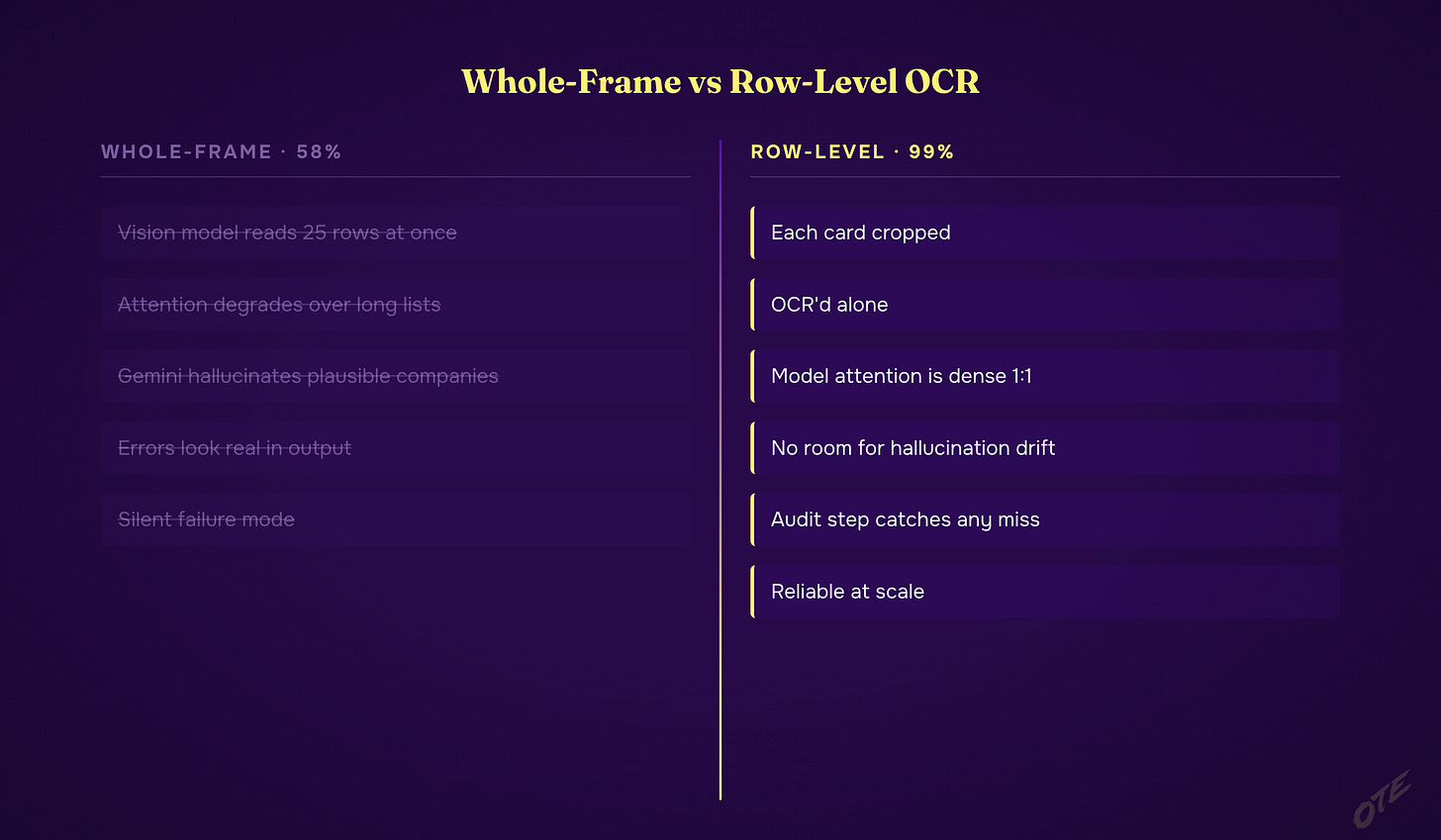

Show any vision model (Claude, Gemini, GPT-5) a single frame of the video, a screen with 25 rows of contact data on it, and ask for every row on it as a spreadsheet.

You'll get about 58% accuracy.

This is measured, not guessed. The model's attention thins out over a long list. And Gemini specifically will invent plausible-sounding fake companies — labeling someone "Dallas Fire & Rescue" when they actually work at an ambulance service three states away. The output reads like the real thing. It isn't.

The worst part: you can't spot the fake rows by eyeballing the spreadsheet. They look exactly like the real ones. The only way to catch them is to compare a sample back to the original video — and if you knew to do that, you'd already be halfway to the fix.
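The spot check described above can be sketched in a few lines. This is a minimal illustration, not the audit step from the paid build: the function names, the sample size, and the idea of recording each check as a true/false value are all assumptions made for the example. A person still has to compare each sampled row against the video by eye.

```python
import random
from typing import Dict, List


def pick_audit_sample(row_ids: List[str], sample_size: int, seed: int = 0) -> List[str]:
    """Pick a reproducible random sample of row IDs to check against the video.

    Hypothetical helper: sample_size and seed are illustrative defaults.
    """
    rng = random.Random(seed)
    k = min(sample_size, len(row_ids))
    return sorted(rng.sample(row_ids, k))


def audit_accuracy(checks: Dict[str, bool]) -> float:
    """Share of sampled rows a human confirmed as matching the video."""
    if not checks:
        raise ValueError("no rows were checked")
    return sum(checks.values()) / len(checks)


# Illustrative IDs only; a real run would use whatever IDs the pipeline assigns.
row_ids = [f"row-{i:04d}" for i in range(1000)]
sample = pick_audit_sample(row_ids, sample_size=50)

# After checking by hand, record True (matches the video) or False per row.
example_checks = {"row-0001": True, "row-0002": True, "row-0003": False, "row-0004": True}
print(audit_accuracy(example_checks))  # 0.75
```

Fixing the seed keeps the sample reproducible, so a second reviewer can check the same rows.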

The fix: read one person at a time

Cut each row out of the frame as its own little picture. Read those little pictures one at a time instead of handing the whole frame over at once. Accuracy jumps from about 58% to 99%.
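As a rough sketch of the cutting step, the function below computes one crop box per row in a frame. It assumes every row has the same pixel height and that the list starts a fixed offset below the top of the frame. Both are assumptions for illustration, and the dimensions in the example are made up. Real recordings may need row boundaries detected per frame.

```python
from typing import List, Tuple

# (left, top, right, bottom) in pixels, the same order Pillow's Image.crop accepts.
Box = Tuple[int, int, int, int]


def row_boxes(frame_width: int, frame_height: int,
              row_height: int, top_offset: int = 0) -> List[Box]:
    """Return one crop box for each full row visible in a frame.

    Assumes uniform row height; partial rows at the bottom are skipped.
    """
    if row_height <= 0:
        raise ValueError("row_height must be positive")
    boxes: List[Box] = []
    top = top_offset
    while top + row_height <= frame_height:
        boxes.append((0, top, frame_width, top + row_height))
        top += row_height
    return boxes


# Hypothetical frame: 1024x1300 pixels, a 50-pixel header, 50-pixel rows.
boxes = row_boxes(frame_width=1024, frame_height=1300, row_height=50, top_offset=50)
print(len(boxes))   # 25
print(boxes[0])     # (0, 50, 1024, 100)
```

Each box can then be passed to an image library's crop call to produce the single-row picture the model reads.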

The tradeoff is compute — you're now reading a thousand small pictures instead of thirty big ones — but you can run a bunch of Claude agents in parallel, so the wall-clock time is about the same. Ten minutes either way.
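The parallel part can be sketched with a thread pool. This is not the agent-dispatch pattern from the paid build. `read_row` is a hypothetical placeholder for one model call on one cropped row, and the worker count is an arbitrary assumption. The point is only that a thousand independent reads can be fanned out and collected in order.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Dict, List


def read_row(crop_id: str) -> Dict[str, str]:
    """Hypothetical stand-in for a single vision-model call on one row crop.

    A real version would send the cropped image to a model and parse the reply.
    """
    return {"crop_id": crop_id, "text": f"placeholder for {crop_id}"}


def read_all(crop_ids: List[str], max_workers: int = 16) -> List[Dict[str, str]]:
    """Read every crop concurrently; results come back in input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(read_row, crop_ids))


# Illustrative IDs for a thousand single-row crops.
crop_ids = [f"row-{i:04d}" for i in range(1000)]
results = read_all(crop_ids)
print(len(results))  # 1000
```

Because each row is read independently, one failed call affects only that row and can be retried without redoing the rest.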

Why it works: when a model is looking at 25 rows at once, its attention is spread thin. When it's looking at one row in isolation, its attention is dense. With a single row on screen, that attention has nowhere to drift.

Who gets this

Most people run the video through Gemini, accept the 58%, and ship garbage lists. The move is a boring one-row-at-a-time pipeline that's been tuned on real runs.

Free: the pixels-only rule, why reading the whole frame fails, and why Gemini in particular invents fake companies.

$50/mo (most readers start here): the seven-step build, the exact thresholds that work, the de-duplication gotcha that breaks every first attempt, the agent-dispatch pattern, the audit step you can't skip.

$2,499/yr: every tool I ship. Edge Copilot is how you talk to all of it through Claude Code. Current tools: Edge Copilot, AutoClaygent, Agent 7, Who to Target and What to Say, Blueprint Cloud, Technology Finder, Video List Extractor. Whatever ships next is included, plus all 3 courses and weekly office hours.