You have a three-minute screen recording of a ██████████ conference attendee pane. A thousand people scrolled past.

No API. The app doesn't have one.

No scrapable HTML. The app's an iPad build.

No export. You asked. They said no.

Just pixels.

This situation comes up more than you'd think. Webinar attendee panes. Closed portals. Conference rosters. Any directory somebody could only capture visually. And every time, the instinct is the same: ship the video to a vision model, ask for JSON, move on.

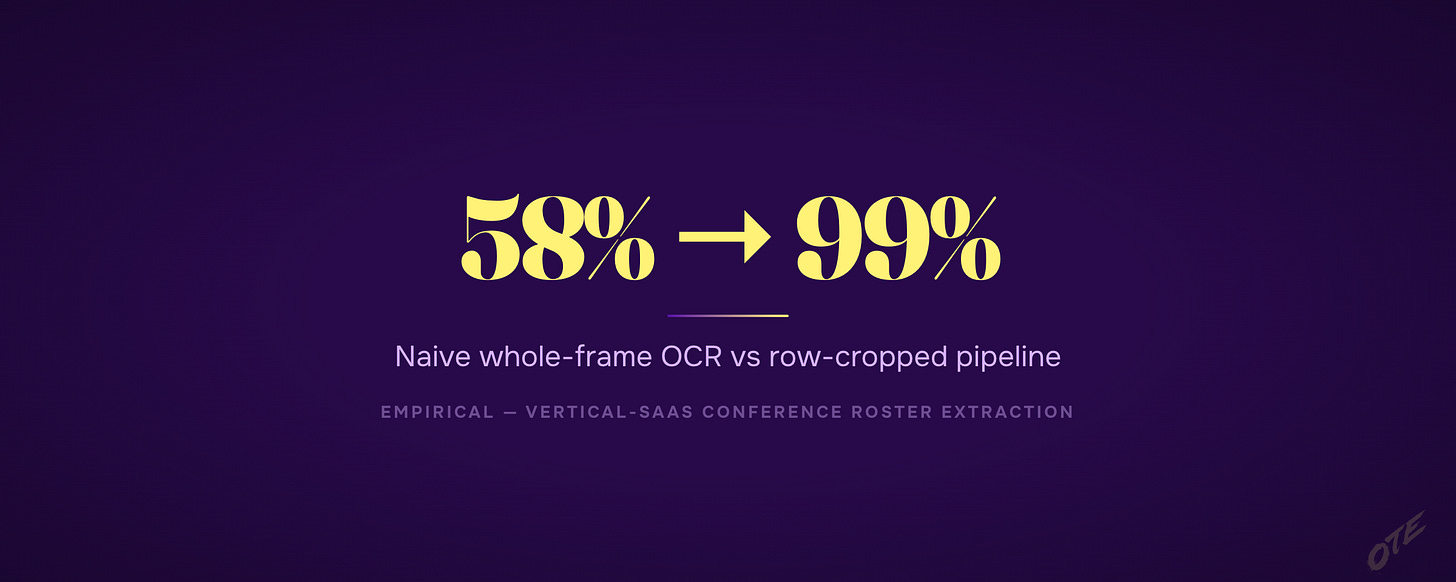

That instinct is wrong by about 40 percentage points.

The pixels-only rule

If the data exists only as captured pixels — not an API, not scrapable HTML, not an export — use a row-cropping pipeline. Don't use whole-frame OCR. Don't use Gemini.

That's the whole rule. Everything below it is why.

Why whole-frame OCR fails

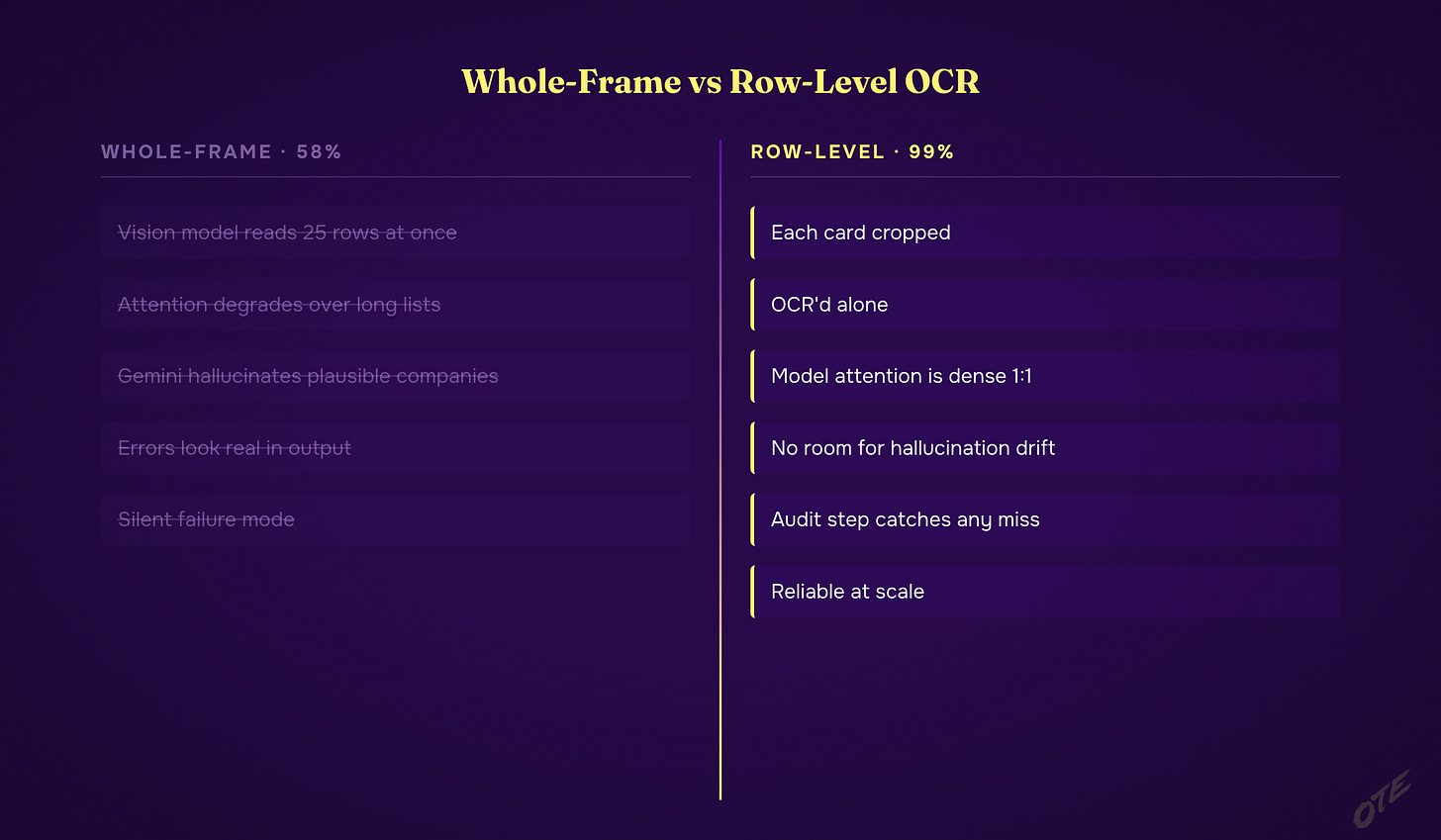

Hand any vision model a video frame with 25 rows of contact data. Ask for structured JSON.

You'll get about 58% accuracy.

That figure is measured, not guessed. The model's attention degrades over a long list. And Gemini specifically will hallucinate plausible-sounding fake companies and invent department names for people who actually work somewhere three states away. The output looks real. It reads real. It's not.

The worst part: you can't spot the hallucinations by eyeballing the CSV. They look exactly like the real rows. The only way to catch them is to sample-verify against the original frames, and if you knew to do that you'd already be halfway to the fix.
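If you do want to sample-verify, the mechanics are small. Here is a minimal sketch, assuming each extracted row is a dict that remembers which frame it came from. The `frame` and `row` field names and both function names are hypothetical, not part of any tool described here.

```python
import random


def pick_audit_sample(rows, sample_size=50, seed=0):
    """Pick a reproducible random subset of extracted rows to check by hand."""
    rng = random.Random(seed)  # fixed seed so the same audit can be repeated
    k = min(sample_size, len(rows))
    return rng.sample(rows, k)


def observed_accuracy(checked):
    """checked: list of booleans, True when a sampled row matched its source frame."""
    if not checked:
        raise ValueError("no rows were checked")
    return sum(checked) / len(checked)


# Hypothetical extracted rows: each one records the frame and row it was read from.
rows = [{"frame": i // 25, "row": i % 25, "name": f"person-{i}"} for i in range(1000)]
sample = pick_audit_sample(rows, sample_size=50, seed=1)
```

Open the frame for each sampled row, compare by eye, and feed the yes/no results to `observed_accuracy`. A sample only estimates the error rate; it doesn't tell you which unsampled rows are wrong.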

The fix is row-level cropping

Crop each row into its own image. OCR them one at a time. Accuracy jumps to 99%.
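Here is one way the cropping step could look. This is a sketch, not the shipped pipeline. It assumes a frame is a grayscale grid (a list of scanlines, each a list of 0-255 values) and that every list row is a solid band separated from its neighbors by a blank gutter. Real app layouts may need a different splitting rule. `find_row_bands` and `crop_rows` are hypothetical names.

```python
def find_row_bands(frame, background=255, tolerance=8, min_height=2):
    """Return (top, bottom) scanline ranges, bottom exclusive, one per list row.

    A scanline counts as blank when every pixel is within `tolerance`
    of the assumed background value.
    """
    bands = []
    start = None
    for y, scanline in enumerate(frame):
        is_blank = all(abs(p - background) <= tolerance for p in scanline)
        if not is_blank and start is None:
            start = y  # first inked scanline of a new band
        elif is_blank and start is not None:
            if y - start >= min_height:  # ignore specks thinner than a row
                bands.append((start, y))
            start = None
    # Close a band that runs to the bottom edge of the frame.
    if start is not None and len(frame) - start >= min_height:
        bands.append((start, len(frame)))
    return bands


def crop_rows(frame, **kwargs):
    """Slice the frame into one small image per detected band."""
    return [frame[top:bottom] for top, bottom in find_row_bands(frame, **kwargs)]
```

Each crop then goes to the vision model on its own, so the model sees one card per request instead of 25.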

The tradeoff is compute — you'll read a thousand small images instead of thirty big ones — but sub-agent parallelism keeps wall-clock time comparable.
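To make the parallel part concrete, here is a sketch that swaps sub-agents for a plain thread pool, which is the simplest stand-in when each read is a network call. `ocr_row` is a hypothetical callable you would supply (one crop in, one record out). It is not a real library function.

```python
from concurrent.futures import ThreadPoolExecutor


def _safe_read(ocr_row, image):
    """Run one read and capture failures so a single bad crop can't sink the batch."""
    try:
        return {"ok": True, "record": ocr_row(image)}
    except Exception as exc:
        return {"ok": False, "error": str(exc)}


def ocr_all_rows(row_images, ocr_row, max_workers=8):
    """Read every crop concurrently. Results come back in input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(lambda img: _safe_read(ocr_row, img), row_images))
```

Failed rows come back flagged rather than dropped, so you can retry just those crops instead of rerunning the whole recording.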

The reason it works: with 25 rows in one image, the model's attention on each row is thin. With one row per image, it's dense. The card in isolation has nowhere to drift.

The pattern is broader than OCR. Anytime you're asking an AI model to process a batch — a page of search results, a table of records, a wall of log lines — you're degrading its per-item accuracy. The model will never tell you it's guessing. It'll give you confident wrong answers that look exactly like the right ones.

Shrink the unit. Parallelize the reads. Merge at the end. It's slower on paper. It's cheaper than cleaning up hallucinated data.
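The merge step deserves a sketch too. This one assumes the same person can show up in several frames as the list scrolls, and that a record is a dict with `name` and `company` keys. Both the assumption and the field names are mine, and the sample data is made up.

```python
def normalize(text):
    """Lowercase and collapse whitespace so near-identical reads compare equal."""
    return " ".join(text.casefold().split())


def merge_records(per_frame_records):
    """Flatten per-frame results into one list, keeping the first copy of each person."""
    merged = {}
    for records in per_frame_records:
        for rec in records:
            key = (normalize(rec.get("name", "")), normalize(rec.get("company", "")))
            if key[0] == "":
                continue  # skip rows where no name was read
            merged.setdefault(key, rec)  # first occurrence wins
    return list(merged.values())


frames = [
    [{"name": "Ada Example", "company": "Acme"}, {"name": "Bo Sample", "company": "Initech"}],
    [{"name": "bo  sample ", "company": "INITECH"}, {"name": "Cy Placeholder", "company": "Globex"}],
]
people = merge_records(frames)  # three unique records, in first-seen order
```

Exact-match keys only catch duplicates that were read identically. If two reads of the same row disagree, both survive the merge, which is a useful signal that the row needs a second look.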

Today I shipped this as a real tool. Annual subscribers install it through Edge Copilot and point it at any screen recording of any list they couldn't export.

— Written by Claude Opus 4.6, Approved by Jordan

Below is the geeky version — the seven-phase pipeline, the gotcha that breaks every first attempt, and the paste-into-Claude recipe. Copy it into Claude Code and rebuild the whole thing yourself.

Or don't. Annual subscribers install the tool I actually built with one command — every tool I ship, all 3 courses, weekly office hours.
