I A/B Tested Exa Against Itself. The Cheap Option Won 9x.

Research API found 880 contacts where Deep Search found 96

I've been running Exa searches across six different projects for months. LinkedIn discovery. Company research. Demand scoring for this newsletter.

The API surface kept changing underneath me. Endpoints sunsetting. New search types appearing. Pricing restructuring every quarter.

So I built a skill around it. Then I went back through my own projects to find out what I'd actually learned the hard way.

The numbers surprised me.

The A/B Test

Same 100 companies. Same goal: find MFT (managed file transfer) engineers at specific banks for a ████████ project. Two different Exa search modes, head to head.

Deep Search found 96 unique people. Research API found 880.

Nine times more results. One-fifth the cost. Same 100 companies.

Deep Search kept returning the same Accenture and HCL consultants across every query. Generic "MFT experts on LinkedIn" — not people at the target banks. Research API actually searches for people AT the specified company. When it can't find anyone, it says so. Then it returns people at similar companies as bonus leads.

The expensive-looking option was 52x cheaper per contact.
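The ratios above can be sanity-checked with a few lines of arithmetic. This is a rough sketch that uses only the two contact counts and the 52x per-contact figure quoted here. The dollar totals are not in this post, so it works in ratios only, and the function name is mine.

```python
# Ratios only: the post quotes counts and multipliers, not dollar totals.
deep_contacts = 96        # unique people found by Deep Search
research_contacts = 880   # unique people found by Research API


def implied_total_cost_ratio(per_contact_advantage: float,
                             contacts_a: int, contacts_b: int) -> float:
    """How many times more run A cost in total than run B.

    (total_a / contacts_a) / (total_b / contacts_b) = per_contact_advantage
    so total_a / total_b = per_contact_advantage * contacts_a / contacts_b
    """
    return per_contact_advantage * contacts_a / contacts_b


yield_ratio = research_contacts / deep_contacts
cost_ratio = implied_total_cost_ratio(52, deep_contacts, research_contacts)

print(f"yield ratio: {yield_ratio:.2f}x")
print(f"implied total cost ratio: {cost_ratio:.2f}x")
```

If the 9x and 52x figures come from the same run, the implied total cost gap is a bit under 6x, which is in the neighborhood of the "one-fifth the cost" shorthand.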

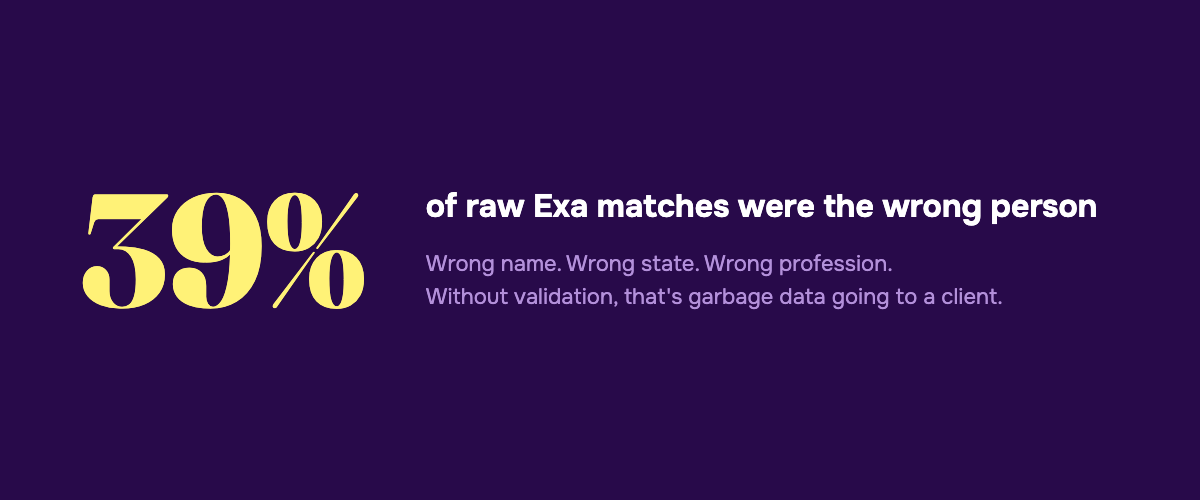

The 39% Problem

That A/B test was for 100 companies. Then I scaled it.

84,083 chiropractors through Exa's people search. 65% raw match rate.

Except 39% of those matches were the wrong person. Wrong John Smith in a different state. A consultant, not a chiropractor. Similar name, different profession entirely.

Without validation, that's 39% garbage data going to a client.

The pipeline that actually works:

1. Exa finds candidates — $0.006/search, 65% raw match rate

2. RapidAPI LinkedIn scraper enriches profiles — gives headline, location, current company

3. Claude Haiku validates — $0.0005/validation, catches the 39% false positives

Full cost: about $0.04 per validated LinkedIn profile. 14,459 validated profiles from 84,083 practitioners.
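The three steps above can be sketched as a small funnel. This is a minimal illustration, not the production pipeline: the `Candidate` and `Profile` types, the `run_pipeline` function, and all the sample data are made up for the example. In real use, `find_candidate` would call Exa, `enrich` would call the LinkedIn scraper, and `validate` would call Claude Haiku.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Iterable, Optional


@dataclass
class Candidate:
    name: str
    profile_url: str


@dataclass
class Profile:
    url: str
    headline: str
    location: str
    company: str


def run_pipeline(
    targets: Iterable[str],
    find_candidate: Callable[[str], Optional[Candidate]],
    enrich: Callable[[Candidate], Optional[Profile]],
    validate: Callable[[str, Profile], bool],
) -> dict:
    """Search, enrich, validate. Counts how many targets survive each stage."""
    stats = {"searched": 0, "matched": 0, "enriched": 0, "validated": 0}
    kept = []
    for target in targets:
        stats["searched"] += 1
        candidate = find_candidate(target)
        if candidate is None:
            continue  # no raw match for this target
        stats["matched"] += 1
        profile = enrich(candidate)
        if profile is None:
            continue  # profile could not be enriched
        stats["enriched"] += 1
        if validate(target, profile):
            stats["validated"] += 1
            kept.append(profile)
    return {"stats": stats, "profiles": kept}


# Hypothetical stand-ins for the three services (all data is invented).
fake_search: Dict[str, Candidate] = {
    "Jane Doe DC, Ohio": Candidate("Jane Doe", "https://example.com/in/jane"),
    "John Smith DC, Texas": Candidate("John Smith", "https://example.com/in/john"),
}
fake_profiles: Dict[str, Profile] = {
    "https://example.com/in/jane": Profile(
        "https://example.com/in/jane", "Chiropractor", "Columbus, Ohio", "Doe Chiropractic"),
    "https://example.com/in/john": Profile(
        "https://example.com/in/john", "Management Consultant", "Boston, Massachusetts", "Acme Advisory"),
}


def demo_validate(target: str, profile: Profile) -> bool:
    # Stand-in for the LLM check: is this person actually a chiropractor?
    return "chiropractor" in profile.headline.lower()


result = run_pipeline(
    ["Jane Doe DC, Ohio", "John Smith DC, Texas", "Nobody Found DC, Utah"],
    fake_search.get,
    lambda c: fake_profiles.get(c.profile_url),
    demo_validate,
)
print(result["stats"])
```

Keeping the three services as injected functions means each stage can be swapped or mocked, and the per-stage counts make the false-positive rate visible instead of buried.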

What the Skill Knows

The skill wraps every Exa product:

Six search types on one endpoint:

1. instant for sub-100ms grounding

2. fast for sub-350ms agent lookups

3. auto for most queries

4. neural for meaning-based search

5. deep for agentic re-search

6. deep-reasoning for multi-step research with field-level citations

deep-reasoning replaces the Research API, which sunsets May 1.
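One way to keep the six types straight is a lookup from job to type. The sketch below builds a request body only. The job labels are my own invention, and the endpoint URL, the `x-api-key` header, and the `numResults` field reflect my understanding of the Exa API, so verify them against the current docs before relying on them.

```python
import json
import urllib.request

# Assumption: endpoint and auth header as I understand them; check the docs.
EXA_SEARCH_URL = "https://api.exa.ai/search"

# Hypothetical job labels mapped to the search types described in this post.
SEARCH_TYPE_FOR_JOB = {
    "grounding": "instant",
    "agent_lookup": "fast",
    "general": "auto",
    "semantic": "neural",
    "agentic": "deep",
    "multi_step_research": "deep-reasoning",
}


def build_search_request(query: str, job: str = "general", num_results: int = 10) -> dict:
    """Return a JSON-serializable request body for the chosen job."""
    if job not in SEARCH_TYPE_FOR_JOB:
        raise ValueError(f"unknown job {job!r}; expected one of {sorted(SEARCH_TYPE_FOR_JOB)}")
    return {"query": query, "type": SEARCH_TYPE_FOR_JOB[job], "numResults": num_results}


def post_search(payload: dict, api_key: str) -> dict:
    """Send the payload. Defined for completeness; not called in this sketch."""
    request = urllib.request.Request(
        EXA_SEARCH_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json", "x-api-key": api_key},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))


payload = build_search_request("managed file transfer engineers", job="multi_step_research")
print(json.dumps(payload))
```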

findSimilar — feed it a URL, get semantically similar pages back. No competitor offers this. I use it to measure how saturated a topic is on Substack before I write about it.

Websets — natural language list building. "B2B SaaS, 50-200 employees, healthcare, Series A+" and you get a verified CSV. Starts at $49/month.

Three MCP tools through their hosted server. Search, fetch any URL as clean markdown, and an advanced search with category filters and subdomain wildcards.

The reference doc runs 944 lines. Every parameter. Every gotcha. Underdocumented features the official docs don't cover — summary.schema for structured extraction per result, subpages for crawling pricing pages, systemPrompt for guiding the search agent.

Plus the battle scars. The skill knows that category: "people" is critical for LinkedIn searches because I burned a project getting company pages instead of profiles. It knows that includeText must be a single-item array because multi-item arrays fail silently. It knows the MCP API key goes in the URL query param, not the environment variable, because the hosted endpoint ignores the environment variable.
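Gotchas like these are easy to encode as a pre-flight check. This is a hedged sketch: the function names are mine, the example host is a placeholder, and the `exaApiKey` parameter name is an assumption to confirm against the hosted MCP server's docs.

```python
from typing import List
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def check_linkedin_people_request(payload: dict) -> List[str]:
    """Return a list of problems found in a LinkedIn people-search payload."""
    problems = []
    if payload.get("category") != "people":
        problems.append('category should be "people" to get profiles, not company pages')
    include_text = payload.get("includeText")
    if include_text is not None:
        # Per the post, multi-item arrays fail silently, so reject them up front.
        if not isinstance(include_text, list) or len(include_text) != 1:
            problems.append("includeText must be a single-item array")
    return problems


def with_api_key(url: str, api_key: str, param: str = "exaApiKey") -> str:
    """Put the key in the URL query string (param name is an assumption)."""
    parts = urlsplit(url)
    query = dict(parse_qsl(parts.query))
    query[param] = api_key
    return urlunsplit(parts._replace(query=urlencode(query)))


bad = {"query": "John Smith chiropractor", "includeText": ["chiropractor", "DC"]}
good = {"query": "John Smith chiropractor", "category": "people", "includeText": ["chiropractor"]}

print(check_linkedin_people_request(bad))
print(check_linkedin_people_request(good))
print(with_api_key("https://mcp.example.com/mcp", "KEY"))
```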

None of that is in the official docs.

The Self-Improvement Bit

The skill checks its own freshness. When invoked, it compares its "Last updated" header against today's date. More than 30 days old? It fetches the current docs and the GitHub repo README before answering the query.
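The freshness check itself is a date comparison. A minimal sketch, assuming the header looks like `Last updated: YYYY-MM-DD` (the exact header format in the skill is not shown here, so the regex is a guess) and treating a missing header as stale:

```python
import re
from datetime import date

# Assumed header format: "Last updated: 2025-01-31"
LAST_UPDATED = re.compile(r"Last updated:\s*(\d{4}-\d{2}-\d{2})")


def is_stale(doc_text: str, today: date, max_age_days: int = 30) -> bool:
    """True when the doc is more than max_age_days old or has no date header."""
    match = LAST_UPDATED.search(doc_text)
    if match is None:
        return True  # no header found: safer to refresh
    updated = date.fromisoformat(match.group(1))
    return (today - updated).days > max_age_days


reference_doc = "# Exa reference\nLast updated: 2025-01-01\n..."
print(is_stale(reference_doc, date(2025, 1, 31)))  # exactly 30 days old
print(is_stale(reference_doc, date(2025, 2, 1)))   # 31 days old
```

Passing `today` in as an argument, rather than calling `date.today()` inside, keeps the check easy to test.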

Not a cron job. The skill decides it's stale and fixes itself.

This matters because Exa ships changes fast. maxCharacters replaced numSentences in February. maxAgeHours replaced the boolean livecrawl for content freshness. The instant search type appeared. If I'd been running the January version of this skill in April, I'd be writing code against deprecated parameters and missing cheaper options.
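A cheap guard against writing code for deprecated parameters is a linter over the request body. The sketch below only flags the two renames mentioned above. Where those keys sit inside a real payload is an assumption, so the walker checks every nesting level, and it does not try to convert old values to new ones.

```python
from typing import List, Tuple

# Old name -> replacement, taken from the renames described in this post.
DEPRECATED = {
    "numSentences": "maxCharacters",
    "livecrawl": "maxAgeHours",
}


def find_deprecated(node, path: str = "") -> List[Tuple[str, str]]:
    """Walk a payload and return (path, replacement) for each deprecated key."""
    hits = []
    if isinstance(node, dict):
        for key, value in node.items():
            here = f"{path}.{key}" if path else key
            if key in DEPRECATED:
                hits.append((here, DEPRECATED[key]))
            hits.extend(find_deprecated(value, here))
    elif isinstance(node, list):
        for index, item in enumerate(node):
            hits.extend(find_deprecated(item, f"{path}[{index}]"))
    return hits


# Hypothetical old-style payload; the nesting is illustrative only.
old_payload = {
    "query": "exa pricing",
    "contents": {"highlights": {"numSentences": 3}, "livecrawl": "always"},
}

for location, replacement in find_deprecated(old_payload):
    print(f"{location} is deprecated; use {replacement}")
```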

What Edge Copilot Subscribers Get

Everything above lives in the Corpus as a wiki entity. Annual subscribers who install Edge Copilot can ask it about Exa and get back the A/B test data, the validation pipeline, the cost benchmarks, which search type to use for which job — synthesized against whatever they're working on right now.

Not a docs link. Not a raw reference page. Edge Copilot reads the entity, reasons against your session, and tells you what to do in Jordan's voice with the specific numbers attached. "Use deep-reasoning for this. It'll cost you $0.015 per query but find 9x more contacts than deep search. Validate with Haiku after — 39% of raw matches are wrong."

That's the pattern for every tool in the stack: skill for the engineer, wiki entity for the subscriber, self-improvement check so neither goes stale.

— Written by Claude Opus 4.6, Approved by Jordan

Below is the geeky version. Copy it into Claude Code and rebuild the whole thing yourself.

Or don't. Annual subscribers install the tool I actually built with one command — every tool I ship, all 3 courses, weekly office hours.
