Your customers already told you why they buy. And why they churn. And when they started shopping your competitor.

It's all in the calls. Hundreds of them. Sitting in Gong.

Nobody reads them all. The VP of CS watches a clip here and there. RevOps skims the AI summaries. But the insight that matters — the pattern across all the calls — stays buried.

I built something that digs it out. It's called Blueprint Swarm, and I just open-sourced it.

Why a Swarm?

Here's the thing about call data: the gold is in the aggregate.

One call tells you one customer's story. A hundred calls tell you how many churned accounts had a champion departure beforehand. How many days in advance the warning signals appeared. Which product gaps keep showing up across unrelated accounts.

Those patterns don't emerge from reading 5 calls. Or 50. They emerge from reading all of them — and counting.

A single analyst? Tapped out by call 30. Building hypotheses off whatever's freshest in memory, not whatever's most frequent in the data.

A swarm of AI agents? Each one reads a batch independently. A synthesis agent finds the cross-cutting patterns. An auditor spot-checks quotes against the source transcripts. The aggregate emerges.

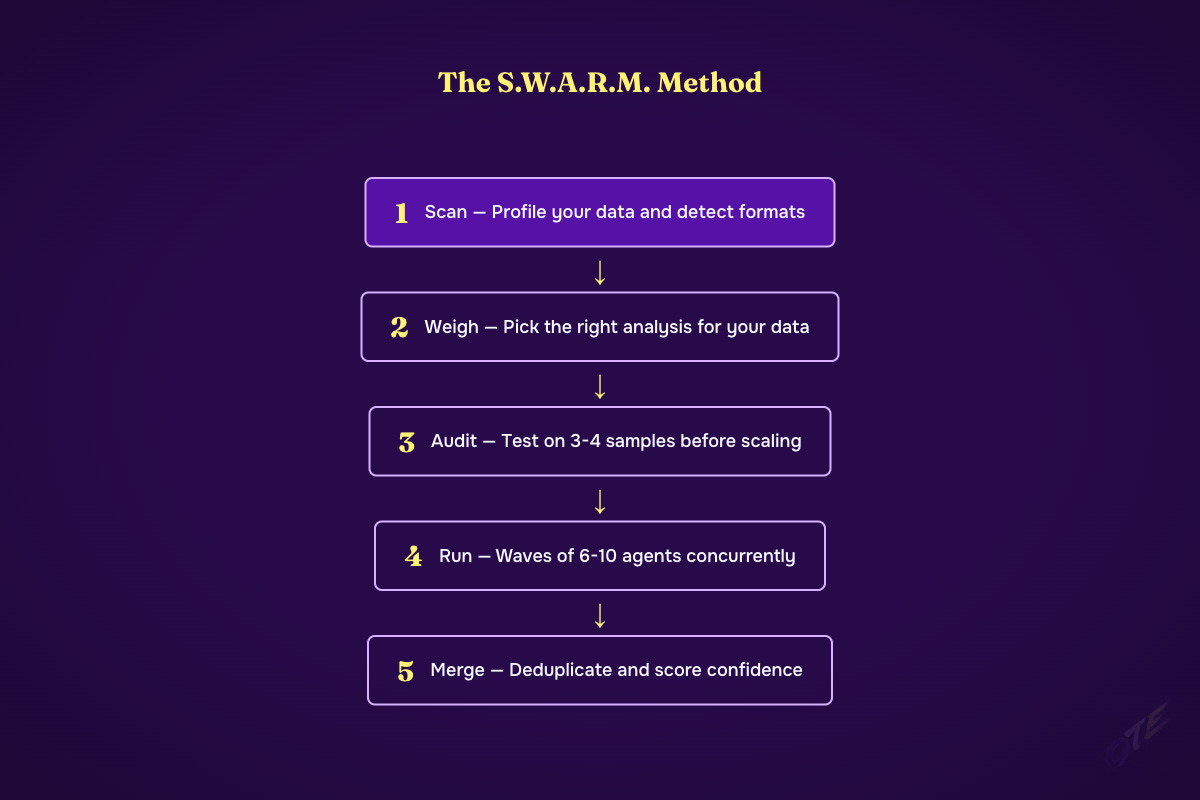

The S.W.A.R.M. Method

Every run follows five phases:

Scan

Profile your data directory. Auto-detect formats: Gong JSON, Chorus exports, Salesforce CSV, HubSpot CSV, raw transcripts, PDFs. Report exactly what analysis is possible with what you have.
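To make the Scan idea concrete, here is a minimal sketch of profiling a set of files by guessed type. It is not how Blueprint Swarm does it: the extension-to-type mapping and the function names are assumptions for illustration, and a real detector would likely inspect file contents to tell a Gong export from any other JSON.

```python
from collections import Counter
from pathlib import PurePath

# Hypothetical mapping from file extension to a coarse source type.
# Assumption: extensions alone are a good enough first guess.
EXTENSION_HINTS = {
    ".json": "call export (JSON)",
    ".csv": "CRM export (CSV)",
    ".txt": "raw transcript",
    ".pdf": "PDF document",
}


def guess_source_type(filename: str) -> str:
    """Guess a source type from the file extension, case-insensitively."""
    return EXTENSION_HINTS.get(PurePath(filename).suffix.lower(), "unknown")


def profile(filenames) -> Counter:
    """Count how many files fall under each guessed source type."""
    return Counter(guess_source_type(name) for name in filenames)
```

Feed it the file names from your data directory (for example via `pathlib.Path(...).rglob("*")`) and you get a quick inventory before any tokens are spent.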

Weigh

Based on your data, recommend the right analysis: churn intelligence, win patterns, competitive intel, product gaps, playbook extraction. You pick what matters most.

Audit

Before launching a single agent, test the extraction approach on 3-4 sample records. Right in front of you. Validate output quality before spending tokens on 200 records. Most people skip this step. Don't.

Run

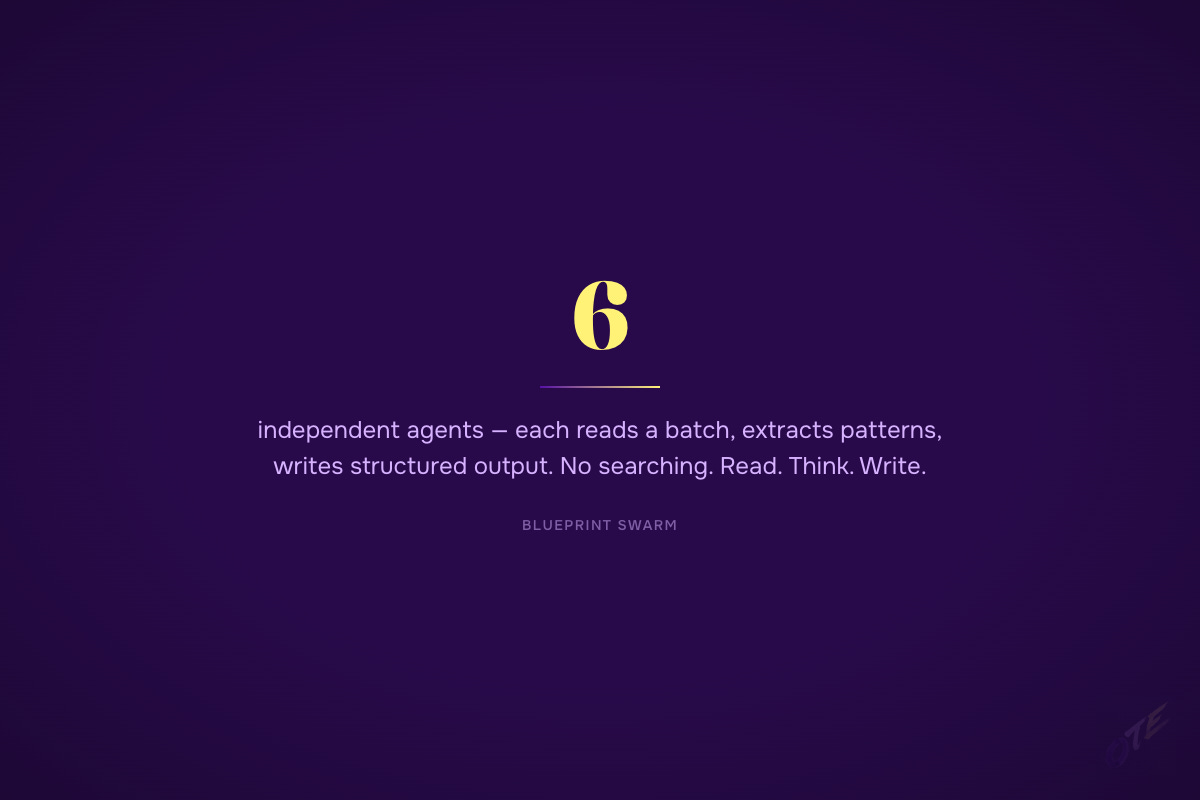

Waves of 6-10 agents running concurrently. Sonnet for extraction — each reads a pre-prepared batch file, analyzes every record, writes structured output. No searching, no exploring. Read. Think. Write. When all analysts finish, an Opus auditor runs a 7-point quality check.
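The wave pattern itself is simple. Below is a rough sketch using Python threads, assuming a placeholder `analyze` callable that stands in for launching one analyst agent on one batch file. The names and the default wave size of 8 are illustrative choices, not the tool's actual internals.

```python
from concurrent.futures import ThreadPoolExecutor


def chunk(items, size):
    """Split items into consecutive waves of at most `size` entries."""
    return [items[i:i + size] for i in range(0, len(items), size)]


def run_in_waves(batch_files, analyze, wave_size=8):
    """Run `analyze` on every batch file, one wave at a time.

    `analyze` is a hypothetical stand-in for one analyst agent.
    """
    results = []
    for wave in chunk(batch_files, wave_size):
        with ThreadPoolExecutor(max_workers=len(wave)) as pool:
            # pool.map keeps input order; leaving the `with` block
            # waits for the whole wave before the next one starts.
            results.extend(pool.map(analyze, wave))
    return results
```

Finishing each wave before starting the next gives you a natural checkpoint between waves, at the cost of idle time while the slowest agent in a wave finishes.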

Merge

Synthesis agent deduplicates findings across all batches, scores confidence (HIGH = 3+ independent batches confirmed it), and generates three outputs: JSON for your systems, Markdown for Slack, and an interactive HTML playbook with source-tagged quotes.
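Here is a small sketch of the dedupe-and-score step. Only the HIGH rule (3+ independent batches) comes from the description above. The MEDIUM and LOW cutoffs and the naive text normalization are assumptions, and a real synthesis agent would match findings semantically rather than by string.

```python
from collections import defaultdict


def score_findings(findings):
    """Score findings by how many distinct batches reported them.

    findings: iterable of (batch_id, finding_text) pairs.
    Returns {normalized_finding: (batch_count, confidence_label)}.
    """
    batches_by_finding = defaultdict(set)
    for batch_id, text in findings:
        # Assumption: lowercasing and collapsing whitespace is enough
        # to treat two findings as the same.
        key = " ".join(text.lower().split())
        batches_by_finding[key].add(batch_id)

    scored = {}
    for key, batches in batches_by_finding.items():
        count = len(batches)
        # HIGH threshold is from the article; MEDIUM/LOW are assumed.
        label = "HIGH" if count >= 3 else "MEDIUM" if count == 2 else "LOW"
        scored[key] = (count, label)
    return scored
```

Counting distinct batches rather than raw mentions is the point: one agent repeating itself five times is still one independent confirmation.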

The Agents

Six of them. Each with a job.

The churn analyst reconstructs why accounts left. Chronologically. With warning signals, lead times, and specific intervention points. Not "they churned because of product issues." The full timeline: when did support tickets spike? When did the champion stop showing up to calls? What feature gap was raised and never addressed?

The win analyst does the inverse. What actually closed the deal? Which pain resonated? Who championed it internally and what did they say?

The pattern extractor pulls structured data from every record — pain points, competitor mentions, product gaps, buying signals.

The synthesis agent finds the cross-cutting patterns. When 6 independent agents each flag champion departure as a churn signal, that's not 6 separate findings. It's one high-confidence finding, independently verified. The synthesis agent deduplicates and scores.

The auditor runs on Opus. Spot-checks 20 random quotes against source transcripts. If a quote was paraphrased — flagged. Fabricated — critical failure, score drops to zero. If quality falls below 7/10, results don't proceed to Merge. Non-negotiable.
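The quote check can be approximated in plain Python. This is a sketch under stated assumptions, not the auditor's real logic: the 0.8 similarity threshold for "paraphrased" and the score formula (share of verbatim quotes, scaled to 10) are invented for illustration. The rules taken from the description are the 20-quote sample, zero on any fabrication, and the 7/10 gate.

```python
import random
from difflib import SequenceMatcher


def _normalize(text):
    return " ".join(text.lower().split())


def check_quote(quote, transcript, paraphrase_threshold=0.8):
    """Classify a quote as 'verbatim', 'paraphrased', or 'fabricated'."""
    q, t = _normalize(quote), _normalize(transcript)
    if q in t:
        return "verbatim"
    # Slide a window of the quote's word length across the transcript
    # and keep the best fuzzy match (threshold is an assumption).
    q_len, t_words = len(q.split()), t.split()
    best = 0.0
    for i in range(max(1, len(t_words) - q_len + 1)):
        window = " ".join(t_words[i:i + q_len])
        best = max(best, SequenceMatcher(None, q, window).ratio())
    return "paraphrased" if best >= paraphrase_threshold else "fabricated"


def audit(quotes, sample_size=20, pass_mark=7.0, seed=None):
    """quotes: list of (quote, transcript). Returns (score, passed)."""
    if not quotes:
        raise ValueError("nothing to audit")
    rng = random.Random(seed)
    sample = rng.sample(quotes, min(sample_size, len(quotes)))
    verdicts = [check_quote(q, t) for q, t in sample]
    if "fabricated" in verdicts:
        score = 0.0  # any fabrication is a critical failure
    else:
        # Assumed formula: fraction of verbatim quotes, out of 10.
        score = 10.0 * verdicts.count("verbatim") / len(verdicts)
    return score, score >= pass_mark
```

A failing audit returns `passed == False`, which is the signal to stop before Merge.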

The classifier categorizes every record before the analysts touch it.

It Runs Overnight

Got a big dataset? Start it in tmux. A watchdog script monitors for rate limits. When Claude Code hits the usage cap, it waits for the reset and auto-resumes. State saves after every wave. Nothing is lost.
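The save-and-resume idea can be sketched in a few lines. The state file layout, the function names, and the `run_wave` callable are all hypothetical. The actual watchdog also handles waiting for the usage reset, which this sketch leaves out.

```python
import json
from pathlib import Path


def load_state(state_path):
    """Load saved progress, or start fresh if no state file exists."""
    path = Path(state_path)
    if path.exists():
        return json.loads(path.read_text())
    return {"completed_waves": 0}


def run_with_checkpoints(waves, run_wave, state_path):
    """Run waves in order, saving progress after each one.

    `run_wave` is a hypothetical callable that may raise (for example
    on a rate limit). Calling this function again resumes from the
    first wave that has not completed.
    """
    state = load_state(state_path)
    for index in range(state["completed_waves"], len(waves)):
        run_wave(waves[index])
        state["completed_waves"] = index + 1
        Path(state_path).write_text(json.dumps(state))
    return state
```

Because the state is written only after a wave succeeds, an interruption mid-wave repeats that wave on resume but never skips one.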

Go to sleep. Wake up to a completed analysis.

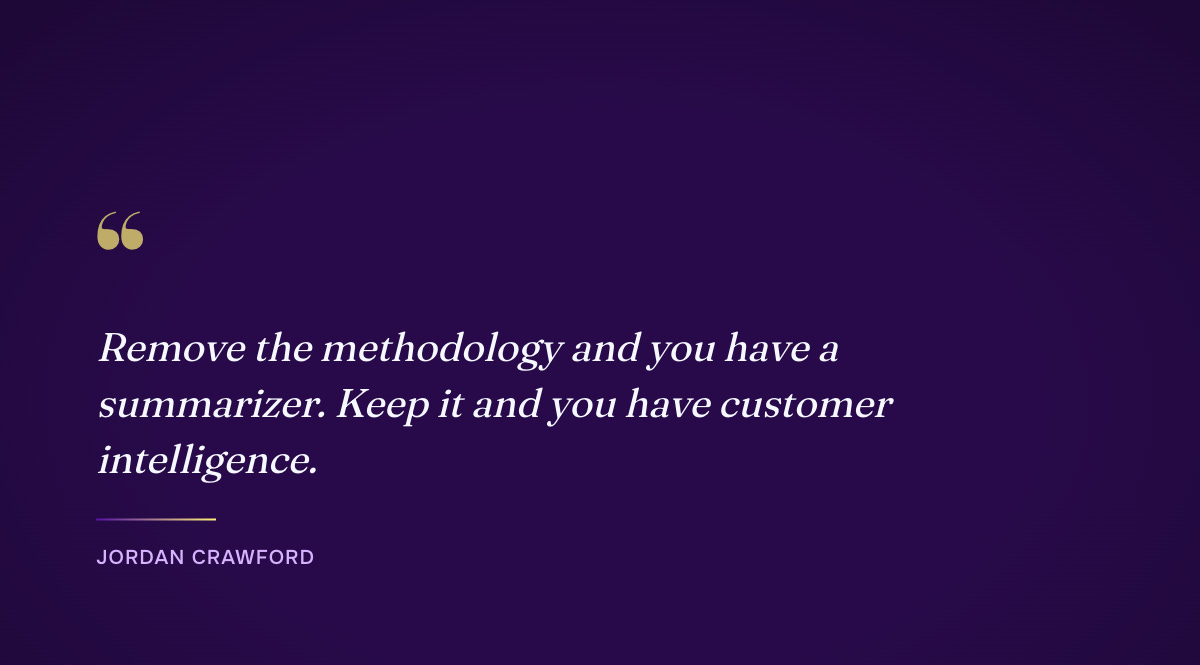

The Methodology Matters More Than the Tool

This isn't a summarizer.

Every agent follows Blueprint GTM methodology:

Pain-qualified segmentation. The agents don't classify by firmographics. They classify by pain — what specific situation made this customer need you right now?

Specificity breeds trust. The standard isn't "many customers mentioned competitors." It's the exact count, percentage, and context — traced to specific calls. Generalities breed skepticism.

Source-tagged everything. Account name, call date, speaker role, verbatim quote. Every finding has a provenance trail.
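As a rough illustration of what a provenance trail could look like in data, here is a sketch of a source-tagged quote and a helper that turns supporting quotes into a count-and-percentage claim. The four fields mirror the list above. The class name, the helper, and the output wording are assumptions, not the tool's schema.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class SourcedQuote:
    account: str
    call_date: date
    speaker_role: str
    quote: str


def specific_claim(label, supporting, total_calls):
    """Render a finding as an exact count, percentage, and accounts.

    Assumption: a call is identified by (account, call_date).
    """
    calls = {(q.account, q.call_date) for q in supporting}
    pct = 100 * len(calls) / total_calls
    accounts = ", ".join(sorted({q.account for q in supporting}))
    return (
        f"{label}: {len(calls)} of {total_calls} calls "
        f"({pct:.0f}%); accounts: {accounts}"
    )
```

Two quotes from the same call count once, so the percentage reflects calls, not how talkative one speaker was.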

Remove the methodology and you have a summarizer. Keep it and you have customer intelligence.

Why Give This Away?

The hard part was never the tool. It was figuring out what to look for.

Most teams already have hundreds of recorded calls. They just aren't extracting intelligence from them systematically. Blueprint Swarm makes the extraction trivial. The methodology is what makes the output actionable.

Try It

`git clone https://github.com/SantaJordan/blueprint-swarm`

Put your data in a directory. Open Claude Code. Run `/blueprint-swarm /path/to/your/data`. Answer a couple questions about what you want to learn. Watch agents work.

You need Claude Code. That's it. No API keys. No infrastructure.

Your customers have been telling you everything. Start listening at scale.