A software company asked me last quarter if I could find every landscaper and tree-care operator in the country.

They'd been buying lists from the usual aggregators for two years. The reps kept asking why the same companies showed up over and over, and why their best customers had never been on any of those lists in the first place.

So I ran my TAM research skill against the vertical. One pass.

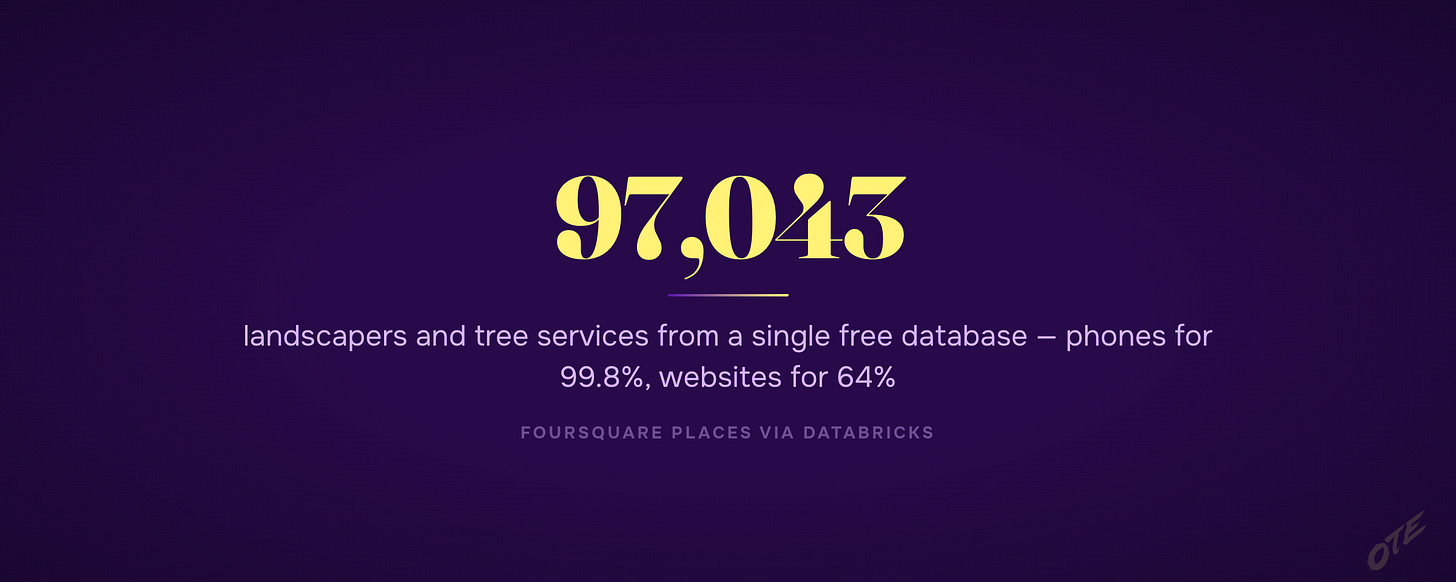

38 sources surfaced. Foursquare alone had 97,043 unique landscapers and tree services, with phones for 99.8% of them and websites for 64%. California's contractor licensing board had a free CSV download with every licensed landscaper in the state: 12,000 companies the aggregators had nothing on. Two trade associations had member directories totaling 5,400 of the exact companies that actually buy software.

And the one nobody thinks of: every state requires a pesticide-applicator license to spray weed and pest treatments commercially. Almost every professional landscaper holds one. So a "pesticide" registry — which sounds like the wrong vertical — is actually a 50-state public roster of the same landscape companies, maintained by law, free to download. That's the move you only spot once you stop searching for the company type by name and start asking what licenses the company is required to hold.

Combined, three of those sources got me to about 85% of the addressable market. For around $0 in data costs.

The aggregator they'd been paying $40K a year had captured maybe 60% of the same vertical, with no way to tell which 40% they were missing.

Why Most TAM Lists Miss the Market

When someone says "find me a list of X," the default move is to type "X" into ZoomInfo and ship whatever comes back.

That's not TAM research. That's giving up on 40% of your market and hoping the other 60% is enough.

Aggregators sell you the companies they happen to have. Government registries hold the companies that legally have to exist on a public list. Trade associations hold the ones that paid to be findable by buyers. Google Maps holds the ones that wanted local customers to walk in.

Those are different lists. They overlap maybe 50% with each other. Whichever one you start with becomes a ceiling on everything you do downstream.

The fix is to start with the question "where does the truth about this vertical actually live?" before you build the list. Most teams skip that step entirely.

What the Skill Actually Does

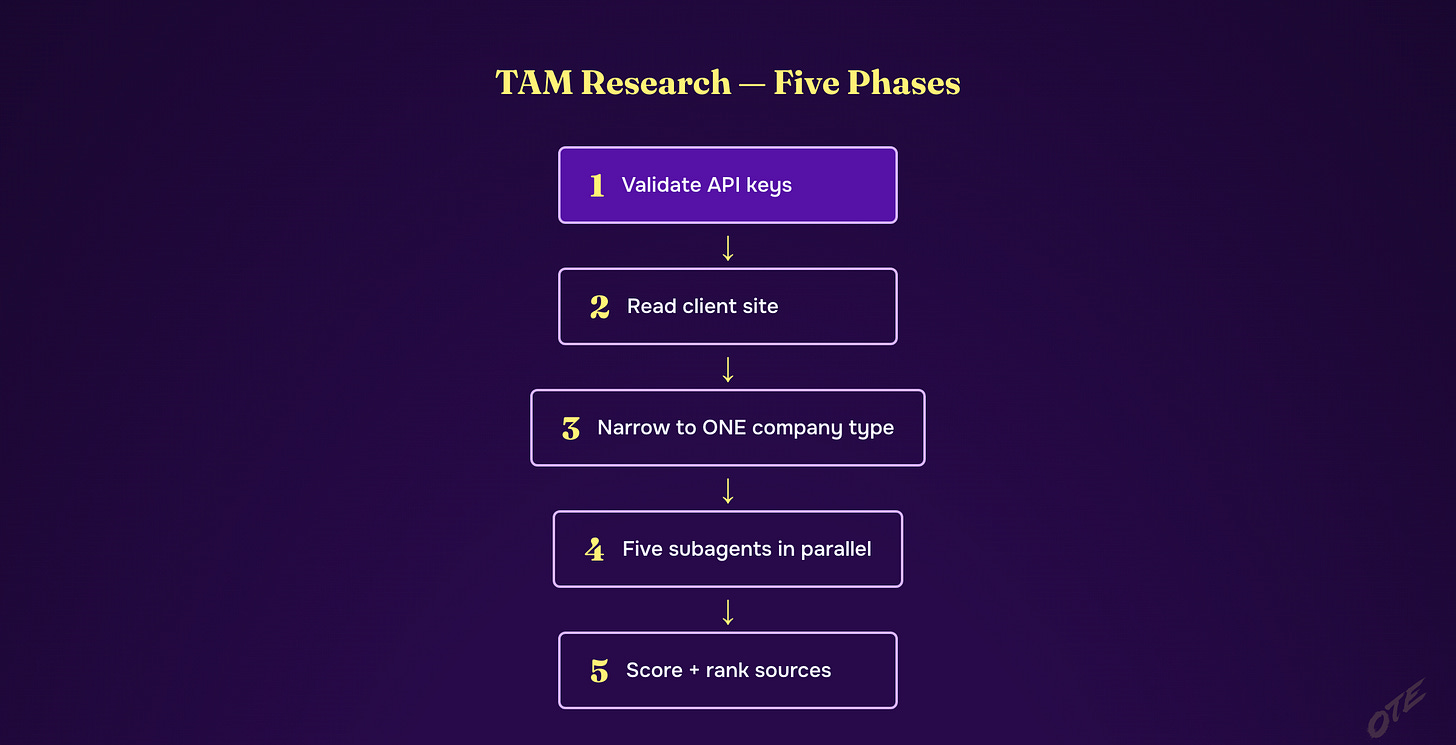

It runs five phases, in order, on any company type you point it at.

Phase zero is the kill switch. It validates every API key the run depends on before doing any work. Five keys, one fails, the whole thing stops. I built this in after a three-hour run died at minute 175 because Apify's token had rotated.
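A minimal sketch of that kill switch, in Python. The function name, the `PreflightError` class, and the idea of passing one validator per key are my assumptions for illustration; the skill's real checks are not shown here. A real validator would make one cheap authenticated request per provider.

```python
import os
from typing import Callable, Mapping


class PreflightError(RuntimeError):
    """Raised when any required credential is missing or rejected."""


def preflight(
    checks: Mapping[str, Callable[[str], bool]],
    env: Mapping[str, str] = os.environ,
) -> None:
    """Validate every key up front; raise before any real work starts.

    `checks` maps an environment variable name (hypothetical names, the
    post does not list the five keys) to a function that returns True
    when the provider accepts the key.
    """
    failures = []
    for name, is_valid in checks.items():
        value = env.get(name, "").strip()
        if not value:
            failures.append(f"{name}: not set")
        elif not is_valid(value):
            failures.append(f"{name}: rejected by provider")
    if failures:
        # One bad key stops the whole run, and the message lists all of them.
        raise PreflightError("; ".join(failures))
```

Collecting every failure before raising means a single run of the check tells you about all the broken keys, not just the first one.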

Phase one reads the client website silently. No questions to me yet. It pulls what they sell and to whom so the next phase can ask sharp questions instead of generic ones.

Phase two narrows, always to one company type. This is the only phase where it talks to me. "Healthcare providers" is not a company type. "Ambulatory surgery centers" is. "Direct primary care clinics" is. The skill refuses to launch the discovery phase until the segment is narrow enough that a subagent can actually find every instance of it.

Phase three is five subagents in one shot. Federal registries. State licensing boards. Industry associations and niche directories. Google Maps and local business APIs. Open data and web datasets. Each one does the same hour of research I would do by hand, just faster, and reports back in structured JSON with the same fields: what the source covers, how many records, websites included, cost, quirks.
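Here is one way the fan-out and the shared report shape could look in Python. Treat it as a sketch: the field names, the `research` callable standing in for a subagent, and the thread pool are all assumptions, not the skill's actual internals. The only things taken from the post are the five categories and the five kinds of field.

```python
import json
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable, List

# The five source categories named in the post.
CATEGORIES = [
    "federal registries",
    "state licensing boards",
    "industry associations and niche directories",
    "Google Maps and local business APIs",
    "open data and web datasets",
]


@dataclass(frozen=True)
class SourceReport:
    # Field names are hypothetical; the post only describes what they hold.
    source: str          # name of the data source
    covers: str          # what the source covers
    record_count: int    # how many records
    website_pct: float   # share of records that include a website, 0-100
    cost_usd: float      # cost to acquire the data
    quirks: str          # anything odd about the source


def parse_reports(raw_json: str) -> List[SourceReport]:
    """Parse one subagent's JSON array; TypeError on missing or extra fields."""
    return [SourceReport(**item) for item in json.loads(raw_json)]


def fan_out(segment: str, research: Callable[[str, str], str]) -> List[SourceReport]:
    """Run one research call per category in parallel and flatten the results."""
    with ThreadPoolExecutor(max_workers=len(CATEGORIES)) as pool:
        raw = pool.map(lambda category: research(segment, category), CATEGORIES)
        return [report for chunk in raw for report in parse_reports(chunk)]
```

Forcing every subagent into the same dataclass is what makes the later scoring step possible: a report with a missing field fails loudly at parse time instead of quietly skewing the ranking.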

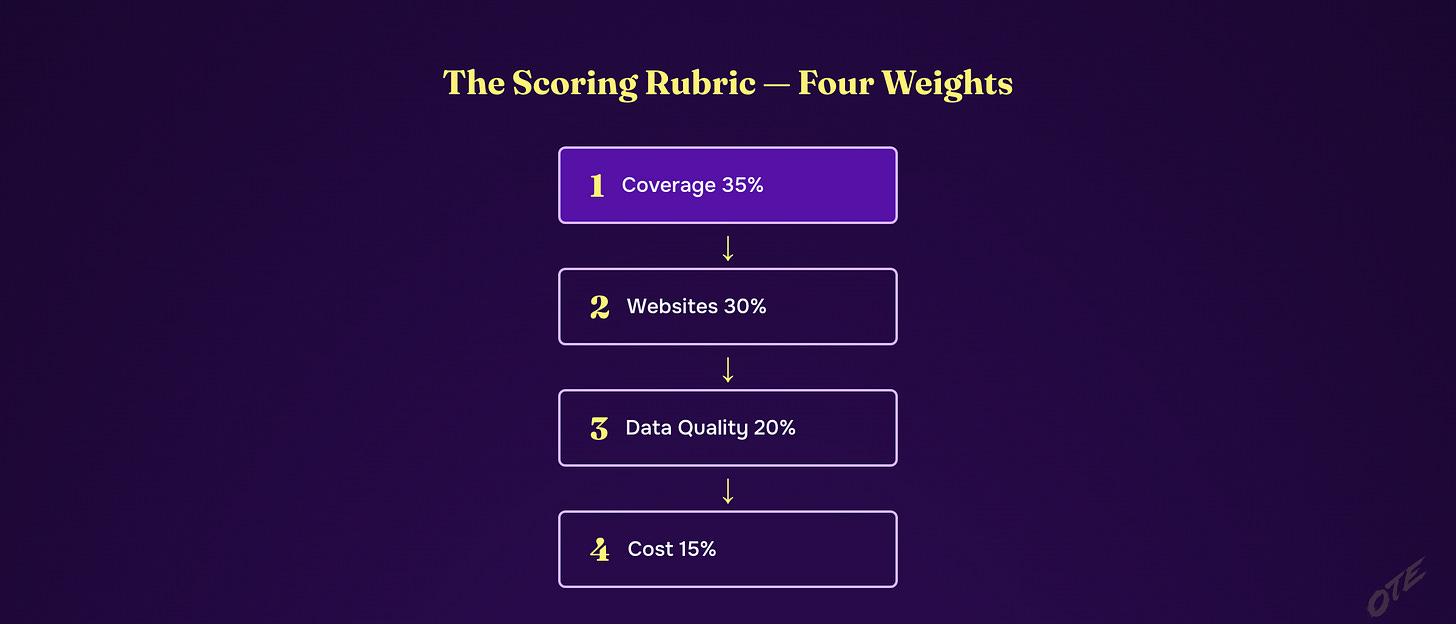

Phase four scores everything. Same rubric across all 38-ish sources. Coverage 35% of the score. Websites included 30%. Data quality 20%. Cost 15%. Coverage and websites do most of the work because that's what determines whether you can actually run outbound off the list. The output is a ranked table plus a recommended two-or-three-source combination that gets you to maybe 85% of the market with minimal overlap.
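The weighting is simple enough to sketch in a few lines of Python. The four weights come straight from the rubric above. Everything else is an assumption: that each criterion is graded 0 to 100, the function names, and the sub-scores in the usage example. The quality and cost figures in particular are values I picked so the totals land on the 70 and 74 quoted in the next section.

```python
from typing import Dict, List, Tuple

# Weights from the rubric: coverage 35%, websites 30%, quality 20%, cost 15%.
WEIGHTS = {"coverage": 0.35, "websites": 0.30, "quality": 0.20, "cost": 0.15}


def score(sub_scores: Dict[str, float]) -> float:
    """Weighted total on a 0-100 scale (assumes each sub-score is 0-100)."""
    if set(sub_scores) != set(WEIGHTS):
        raise ValueError(f"expected exactly these criteria: {sorted(WEIGHTS)}")
    for name, value in sub_scores.items():
        if not 0 <= value <= 100:
            raise ValueError(f"{name} must be between 0 and 100, got {value}")
    return round(sum(WEIGHTS[name] * sub_scores[name] for name in WEIGHTS), 1)


def rank(sources: Dict[str, Dict[str, float]]) -> List[Tuple[str, float]]:
    """Return (source, score) pairs, best first."""
    scored = [(name, score(sub)) for name, sub in sources.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Illustrative sub-scores only; quality and cost values are assumed.
sources = {
    "licensed-contractor database": {
        "coverage": 100, "websites": 0, "quality": 100, "cost": 100,
    },
    "Google Maps": {
        "coverage": 80, "websites": 100, "quality": 50, "cost": 40,
    },
}
ranking = rank(sources)
# [('Google Maps', 74.0), ('licensed-contractor database', 70.0)]
```

Note what the weights imply: a source with perfect coverage and no websites tops out at 70, so a free, complete registry can still lose to a paid source whose records are reachable.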

The whole thing takes about 45 minutes and costs me $2 to $10 in API calls.

The Rubric Does the Hard Part

The thing that makes this work isn't the subagents. It's the rubric.

Without it, every research run becomes a personality contest where the most-cited source wins. With it, you can say "this licensed-contractor database covers 100% of the regulated population, has zero website fields, and is government-mandated, so it scores 70 — and Google Maps scores 74 because it has 80% coverage but every record has a website." Then you stop arguing.

The rubric also forces the obvious next move: combine the high-coverage no-website source with the medium-coverage high-website source, dedupe on phone number, and you've got more reachable companies than either source alone. That combination is what the recommendation table gives you.
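The combine-and-dedupe step can be sketched like this in Python. It assumes US-style 10-digit phone numbers and records shaped as plain dicts with a `phone` field; the function names and record fields are hypothetical. Matching on phone alone will also merge two businesses that share a number, so treat the output as a starting point, not a clean master list.

```python
import re
from typing import Dict, Iterable, List, Optional


def normalize_phone(raw: Optional[str]) -> Optional[str]:
    """Reduce a US phone number to 10 digits, or None if it doesn't fit."""
    digits = re.sub(r"\D", "", raw or "")
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]  # drop the leading country code
    return digits if len(digits) == 10 else None


def merge_on_phone(*sources: Iterable[Dict[str, str]]) -> List[Dict[str, str]]:
    """Merge records across sources, keyed on normalized phone number.

    Earlier sources win on conflicting fields; later sources only fill
    blanks. Records without a usable phone are kept but never merged.
    """
    merged: Dict[str, Dict[str, str]] = {}
    unmatched: List[Dict[str, str]] = []
    for records in sources:
        for record in records:
            key = normalize_phone(record.get("phone"))
            if key is None:
                unmatched.append(dict(record))
                continue
            combined = merged.setdefault(key, {"phone": key})
            for field, value in record.items():
                if field != "phone" and value and not combined.get(field):
                    combined[field] = value
    return list(merged.values()) + unmatched


# Made-up records: a registry row with no website, a maps row with one.
registry = [{"name": "Acme Tree Care", "phone": "(555) 010-0199", "license": "C27-1"}]
maps = [
    {"name": "Acme Tree Care LLC", "phone": "+1 555-010-0199", "website": "https://acme.example"},
    {"name": "Birch Landscaping", "phone": "555-010-0142", "website": "https://birch.example"},
]
combined = merge_on_phone(registry, maps)
```

Passing the registry first means its legal name and license number survive, while the maps source contributes the website that makes the record usable for outbound.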

Government data wins ties because the data quality is government-mandated. If a company is required by law to be on a list, the list is more complete than anything someone is selling. Most teams forget this.

Why I'm Releasing It Now

Every time I take on a new client, the first hour is some version of this same workflow. Pull their site. Narrow the segment. Fan out across federal, state, association, local, and open-data sources. Score what comes back. Pick the two or three sources that get me to coverage. Hand the build off.

Doing it five times burned the methodology in. Doing it twenty times made me write the skill. Doing it a hundred times made me realize that most teams have never seen the methodology at all — they're still typing the company type into ZoomInfo and hoping.

So it's going up.

— Written by Claude Opus 4.7 (1M context), Approved by Jordan

Below is the geeky version. Copy it into Claude Code and rebuild the whole thing yourself.

Or don't. Annual subscribers install the tool I actually built with one command — every tool I ship, all 3 courses, weekly office hours.
