Your LLM writes 3,000 words. Your CRO reads for 30 seconds.

I built the editorial layer that closes the gap — five questions, an AI-tell lint, and a chart agent that mostly says "no chart." Shipped today.

I asked Claude to write me an exec brief on customer churn. It produced 3,214 words. The recommendation was on page 4. The action layer didn't exist.

This is what every LLM does. Generates. Piles. Buries the point under "comprehensive analysis." A CRO opens it, gives it 30 seconds, can't find what to do, closes the tab.

I rebuilt that brief by hand. Took the same 3,214 words and cut them to 1,180. Moved the recommendation to sentence one. Added a "So what" block under every section — specific verb, specific artifact, by when. Picked one chart out of the eight the LLM produced. Killed the rest.

The result was a 30-second read. Same insight. Cleaner.

The third time I did this in a week, I stopped. Every time, I was running the same five operations:

1. Find what's important

2. Cut what doesn't need to be there

3. Surface what the reader will actually do with this

4. Specify how they take action

5. Reorder the whole thing so the answer is first

So I built it.

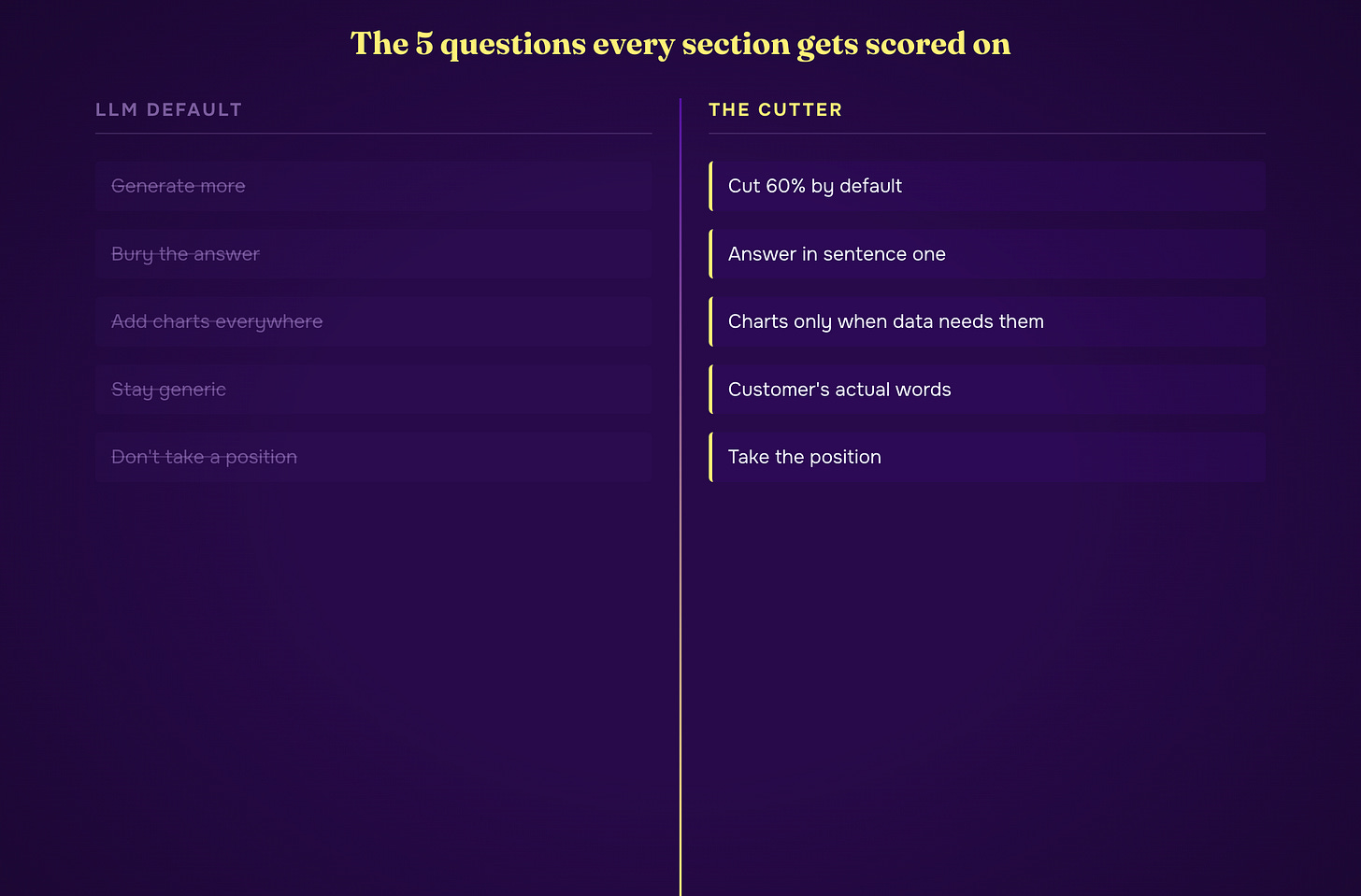

The five questions

That's the Editorial Frame. Every section of the input gets scored 1–5 on each question. Default verdict is `cut`. You only `keep` a section when it earns it.
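Scoring a section against the Editorial Frame is mechanical enough to sketch. Here is a minimal Python version. It assumes a keep rule the post doesn't publish: the thresholds `KEEP_MIN_SCORE` and `KEEP_MIN_MEAN` and the short question labels are hypothetical. It keeps the default the post describes, though. Anything that doesn't clear the bar is `cut`.

```python
from dataclasses import dataclass

# Short labels for the five Editorial Frame questions (hypothetical names).
QUESTIONS = (
    "important",  # 1. What's important?
    "necessary",  # 2. Does it need to be there?
    "action",     # 3. What will the reader do with it?
    "how",        # 4. How do they take action?
    "order",      # 5. Is the answer first?
)

# Hypothetical thresholds: the post does not publish the real cutoff.
KEEP_MIN_SCORE = 3   # no single question may score below this
KEEP_MIN_MEAN = 4.0  # and the average across all five must reach this


@dataclass
class Section:
    title: str
    scores: dict  # question label -> score from 1 to 5


def verdict(section: Section) -> str:
    """Default to 'cut'; return 'keep' only when the section earns it."""
    # A question that was never scored counts as the lowest score.
    values = [section.scores.get(q, 1) for q in QUESTIONS]
    if any(not 1 <= v <= 5 for v in values):
        raise ValueError(f"scores must be 1-5 in section {section.title!r}")
    mean = sum(values) / len(values)
    if min(values) >= KEEP_MIN_SCORE and mean >= KEEP_MIN_MEAN:
        return "keep"
    return "cut"


sections = [
    Section("Recommendation", {"important": 5, "necessary": 5, "action": 5, "how": 4, "order": 5}),
    Section("Methodology", {"important": 2, "necessary": 1, "action": 1, "how": 1, "order": 3}),
]
for s in sections:
    print(s.title, verdict(s))
```

Run as written, this keeps the recommendation section and cuts the methodology section.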

The questions map to the established canon of executive communication. Tufte's data-ink ratio answers question one: what's important. Doumont's signal-to-noise answers question two: what needs to be there. The military's BLUF (Bottom Line Up Front) doctrine answers three and four: what to do, and how to do it. Minto's Pyramid Principle answers five: how it's layered, with the answer first.

That's the whole methodology. Five questions. No frameworks-on-top-of-frameworks. No PowerPoint templates. Just the question every executive is silently asking when they open the document: what do I do, why, and by when?

The lint engine

The skill runs lint on its own output. Not the kind you run on code. The kind that catches LLM tells.

Twelve patterns the model loves and a CRO hates. Any hard hit blocks publishing:

| Pattern | What it catches |
| --- | --- |
| reversal cliffhanger | The X wasn't the Y. The X was the Z. |
| antithesis tic | It is not just X, it is Y. |
| throat-clearing opener | "In today's...", "As we all know..." |
| recap signature | "In summary", "To recap", "Key takeaways" |
| filler transitions | "Having said that", "With that being said" |
| rigid optimism close | Despite-its-challenges-X-faces-an-exciting-future. |
| imagine-a-world opener | "Imagine a world where..." |
| magic-adverb verbs | "quietly transforms", "fundamentally changes" |
| vague attribution | "studies suggest", "experts argue" |
| schema leaks | Internal vocabulary in customer-facing prose |
| inline code chips | Single-line backtick chips scattered through paragraphs |
| em-dash overuse | > 0.15 per sentence (GPT-4.1 uses 3.3× the human rate) |

Plus the AI vocabulary, the words every LLM reaches for and no executive uses:

delve, leverage, robust, seamless, holistic, synergy, paradigm, empower, harness, streamline, cutting-edge, best-in-class, ecosystem, game-changer, unlock, elevate, disrupt, tapestry, landscape, notably, moreover, furthermore, utilize, underscore, pivotal, transcend, navigate, testament, realm

Any hard hit fires the block. One auto-retry with the lint output piped back into the rewriter. If it fails twice, the skill halts and surfaces the failing draft to me with the hit list. I either edit or override with a written reason. Never silently bypass.
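Here is a minimal sketch of the lint gate in Python, assuming plain regular expressions. It shows only six of the twelve patterns, and their regexes are illustrative guesses rather than the skill's rules. The vocabulary list and the 0.15 em-dash threshold come from the post. `rewrite` stands in for a hypothetical rewriter callable that takes the draft and the hit list.

```python
import re
from collections.abc import Callable

# Six of the twelve patterns as illustrative regexes (guesses, not the skill's rules).
HARD_PATTERNS = {
    "antithesis tic": re.compile(r"\bit(?:'s| is) not just\b[^.]*?,\s*it(?:'s| is)\b", re.IGNORECASE),
    "throat-clearing opener": re.compile(r"^\s*(?:in today's|as we all know)\b", re.IGNORECASE | re.MULTILINE),
    "recap signature": re.compile(r"\b(?:in summary|to recap|key takeaways)\b", re.IGNORECASE),
    "filler transitions": re.compile(r"\b(?:having said that|with that being said)\b", re.IGNORECASE),
    "imagine-a-world opener": re.compile(r"\bimagine a world where\b", re.IGNORECASE),
    "vague attribution": re.compile(r"\b(?:studies suggest|experts argue)\b", re.IGNORECASE),
}

# The AI vocabulary from the post. Exact word match only, so inflections
# such as "leveraging" slip through in this sketch.
AI_VOCABULARY = {
    "delve", "leverage", "robust", "seamless", "holistic", "synergy", "paradigm",
    "empower", "harness", "streamline", "cutting-edge", "best-in-class", "ecosystem",
    "game-changer", "unlock", "elevate", "disrupt", "tapestry", "landscape", "notably",
    "moreover", "furthermore", "utilize", "underscore", "pivotal", "transcend", "navigate",
    "testament", "realm",
}

EM_DASH_LIMIT = 0.15  # em-dashes per sentence, the threshold from the post


def lint(text: str) -> list:
    """Return the hard hits; an empty list means the draft passes."""
    hits = [name for name, pattern in HARD_PATTERNS.items() if pattern.search(text)]
    # Tokenize into lowercase words, keeping hyphenated compounds whole.
    words = set(re.findall(r"[a-z]+(?:-[a-z]+)*", text.lower()))
    hits += [f"ai vocabulary: {w}" for w in sorted(AI_VOCABULARY & words)]
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    if sentences and text.count("\u2014") / len(sentences) > EM_DASH_LIMIT:
        hits.append("em-dash overuse")
    return hits


def publish_gate(draft: str, rewrite: Callable[[str, list], str]) -> str:
    """One auto-retry with the hits piped back, then halt for a human."""
    hits = lint(draft)
    if not hits:
        return draft
    retried = rewrite(draft, hits)
    hits = lint(retried)
    if hits:
        # Surface the failing draft's hit list; never silently bypass.
        raise RuntimeError(f"lint failed twice: {hits}")
    return retried
```

The gate follows the same flow as the skill. It allows one retry with the hits piped back, then stops hard and hands the hit list to a human.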

The chart agent

Most LLMs produce charts the way they produce prose. Too many. Mostly decorative. Sometimes misleading.

I gave the chart job to a dedicated sub-agent that reads Tufte first. Twelve rules, applied in order; the first match wins. Among them:

Single number, no comparison → no chart (doesn't beat the sentence)

Two numbers, simple ratio → no chart (bold the numbers in prose)

Prose already carries the comparison ("37% vs 12%") → no chart

Time series, 5 points or fewer → sparkline inline

Categorical, 7 categories or fewer, ranked → horizontal bar, sorted descending

Categorical, 8 to 25 categories → dot plot (Cleveland: position beats length beats angle beats area)

Anything pie-shaped → bar chart. Always.

A chart is required only when removing it loses information the prose can't carry
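An ordered, first-match-wins rule set maps naturally onto a list of predicates. This Python sketch covers only the rules listed above. The four rules the post doesn't show aren't modeled, and anything that falls through returns no chart, following the last rule. `DataShape` and its fields are hypothetical stand-ins for whatever the agent actually reads from the data.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class DataShape:
    kind: str                           # hypothetical labels: "single", "pair", "time_series", "categorical"
    n: int                              # number of data points or categories
    ranked: bool = False                # categories have a meaningful order
    prose_has_comparison: bool = False  # e.g. the text already says "37% vs 12%"
    pie_shaped: bool = False            # part-to-whole data someone would draw as a pie


# (rule name, predicate, chart type); a chart type of None means "no chart".
RULES: List[Tuple[str, Callable[[DataShape], bool], Optional[str]]] = [
    ("single number", lambda d: d.kind == "single", None),
    ("simple ratio", lambda d: d.kind == "pair", None),
    ("prose carries it", lambda d: d.prose_has_comparison, None),
    ("short time series", lambda d: d.kind == "time_series" and d.n <= 5, "sparkline"),
    ("few ranked categories",
     lambda d: d.kind == "categorical" and d.n <= 7 and d.ranked,
     "horizontal bar, sorted descending"),
    ("many categories", lambda d: d.kind == "categorical" and 8 <= d.n <= 25, "dot plot"),
    ("pie-shaped", lambda d: d.pie_shaped, "bar chart"),
]


def choose_chart(shape: DataShape) -> Optional[str]:
    """Walk the rules in order; the first match wins."""
    for _name, matches, chart in RULES:
        if matches(shape):
            return chart
    # Nothing matched: default to no chart, since a chart has to earn its place.
    return None
```

For example, `choose_chart(DataShape("categorical", 12))` returns `"dot plot"`. The same twelve categories with `prose_has_comparison=True` return `None`, because rule three fires first.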

The agent has read Cleveland on perceptual encoding. Stephen Few on chartjunk. Bertin on visual variables. Cole Nussbaumer Knaflic on attention. Alberto Cairo on truthful visualization. Not as decoration. As constraints.

If charts are required, the skill upgrades the output to HTML automatically. A warning prints. The reader gets the brief styled like the playbooks at playbooks.blueprintgtm.com.
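The format upgrade itself is a single branch. The sketch below assumes the chart agent hands back a list of the charts that survived its rules (a hypothetical interface) and that the warning goes through Python's standard `warnings` module. It doesn't model the playbook styling.

```python
import warnings


def choose_output_format(charts: list) -> str:
    """Markdown by default; upgrade to HTML only when at least one chart survived."""
    if charts:
        # The post says a warning prints when the upgrade happens.
        warnings.warn(f"{len(charts)} chart(s) required; upgrading output to HTML")
        return "html"
    return "markdown"
```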

What it doesn't do

It doesn't generate AI hero images. Stock-illustration energy has no place on an exec brief.

It doesn't add words to feel "complete." Length isn't depth. The target is 60% of the original word count; any output longer than 65% gets re-fired with "cut harder."

It doesn't write what the reader already knows. Methodology sections are the first thing the editor agent flags as `cut`.

It doesn't mention itself in the output. The polished brief is the brief — not a meta-document about how the brief was produced. The reader has never heard of this skill and doesn't need to.
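The length rule reduces to a ratio check. This sketch uses the post's two numbers, the 60% cap and the 65% re-fire line. It counts words by splitting on whitespace, which is an assumption. The post doesn't say how a draft between the two numbers is handled, so the sketch reports each check separately.

```python
TARGET_RATIO = 0.60  # the 60% cap from the post
REFIRE_RATIO = 0.65  # drafts above 65% of the original get sent back


def word_count(text: str) -> int:
    """Whitespace word count (an assumption; the skill's counter isn't specified)."""
    return len(text.split())


def length_ratio(original_words: int, draft_words: int) -> float:
    if original_words <= 0:
        raise ValueError("original must have at least one word")
    return draft_words / original_words


def within_cap(original_words: int, draft_words: int) -> bool:
    return length_ratio(original_words, draft_words) <= TARGET_RATIO


def needs_refire(original_words: int, draft_words: int) -> bool:
    """True when the draft should go back with the instruction "cut harder"."""
    return length_ratio(original_words, draft_words) > REFIRE_RATIO


# The churn brief from the opening: 3,214 words cut to 1,180.
print(f"{length_ratio(3214, 1180):.1%}")  # 36.7%
print(needs_refire(3214, 1180))           # False
```

On the churn brief from the opening, 1,180 of 3,214 words is about 36.7%, comfortably under the cap.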

The customer voice problem

Generic exec briefs feel like they could apply to anyone. The fix is the customer's actual words.

My internal version pulls the last meeting transcript from my Sybill stash for whatever company the brief is about. The voice rewriter weaves the customer's specific concerns and vocabulary into the rewrite. The CRO reads it and thinks "this is about us, not a template."

Subscribers don't have my Sybill access. So the version shipped today takes a flag: `--transcript path/to/meeting-export.md`. Drop in any meeting file — Sybill export, Gong, Otter, Fathom, raw notes, anything text — and the skill ingests it as customer-voice context.
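Here is a sketch of how a `--transcript` flag could be wired with Python's `argparse`. Only the flag name comes from the post. The program name `exec-brief`, the positional `draft` argument, and the framing text handed to the voice rewriter are hypothetical. Because any text file is accepted, the loader decodes it as UTF-8 and replaces anything it can't decode instead of failing on a messy export.

```python
import argparse
from pathlib import Path
from typing import List, Optional


def parse_args(argv: Optional[List[str]] = None) -> argparse.Namespace:
    # "exec-brief" is a hypothetical program name, not the shipped tool's.
    parser = argparse.ArgumentParser(prog="exec-brief")
    parser.add_argument("draft", type=Path, help="the LLM draft to edit")
    parser.add_argument(
        "--transcript",
        type=Path,
        default=None,
        help="any text meeting export: Sybill, Gong, Otter, Fathom, raw notes",
    )
    return parser.parse_args(argv)


def voice_context(transcript: Optional[Path]) -> str:
    """Read the transcript as plain text and frame it for the voice rewriter."""
    if transcript is None:
        return ""  # no flag: the rewrite runs without customer-voice context
    text = transcript.read_text(encoding="utf-8", errors="replace")
    return (
        "Customer's own words from the latest meeting. "
        "Reuse their concerns and vocabulary:\n\n" + text
    )
```

You would invoke it as `exec-brief draft.md --transcript path/to/meeting-export.md`. Without the flag, `voice_context` returns an empty string and the rewrite runs without customer voice.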

The output lands as a local file. The polished markdown copies to your clipboard automatically. Paste into a Google Doc, Substack, email, Slack. Whatever your delivery surface is, it's already on your clipboard.

What I learned

Most "AI bloat" isn't an AI problem. It's an editorial problem we never solved.

Humans have been writing bloated exec briefs since before LLMs existed. The 60-page consultant deck for a one-sentence recommendation. The "executive summary" that's longer than the substance below. The chart that exists because the slide needed three charts.

What LLMs did was industrialize it. The bloat got cheap. The volume went up. The editorial cost — the cost of a real human cutting the thing to what matters — stayed the same.

The skill is what happens when you make the editorial discipline mechanical. Five questions, applied every time. Lint that blocks the LLM tells. A chart agent that knows when not to make a chart.

The 30-second window is the only thing that matters to your reader. Build for it.

— Written by Claude Opus 4.7, Approved by Jordan

Below is the geeky version. Copy it into Claude Code and rebuild the whole thing yourself.

Or don't. Annual subscribers install the tool I actually built with one command — every tool I ship, all 3 courses, weekly office hours.

→ Go annual — $2,499/yr · Start at $50/mo (most readers start here)